Is thinking about monetization a waste of our best minds?

I just recently watched this talk by Jack Conte, musician, video artist, and cofounder of Patreon:

Jack dives into how rapidly the Internet has disrupted the business of selling reproducible works, such as recorded music and investigative reporting, and how important (and exciting) it is to build new ways for the people who create these works to make a living doing so. Of course, Jack has some particular ways of doing that in mind, such as subscriptions and subscription-like patronage of artists via Patreon.

But this also made me think about this much-repeated[1] quote from Jeff Hammerbacher (formerly of Facebook, Cloudera, and now doing bioinformatics research):

“The best minds of my generation are thinking about how to make people click ads. That sucks.”

I certainly agree that many other types of research can be very important and impactful, and often more so than working on data infrastructure, machine learning, market design, etc. for advertising. However, Jack Conte’s talk certainly helped make the case for me that monetization of “content” is something that has been disrupted already but needs some of the best minds to figure out new ways for creators of valuable works to make money.

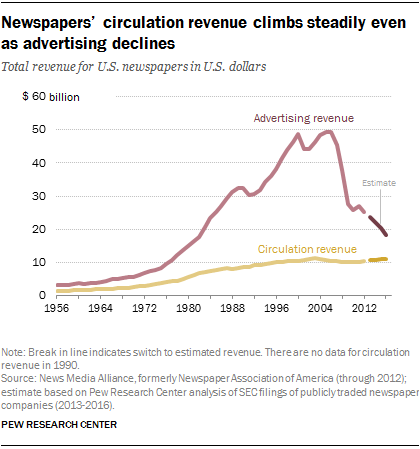

Some of this might be coming up with new arrangements altogether. But it seems like this will continue to occur partially through advertising revenue. Jack highlights how little ad revenue he often saw, even as his videos were getting millions of views. And newspapers have been less able to monetize online attention through advertising than they were in print.

Some of this may reflect that advertising dollars were just really poorly allocated before. But improving this situation will require a mix of work on advertising — certainly beyond just getting people to click on ads — such as providing credible measurement of the effects and ROI of advertising, improving targeting of advertising, and more.

Another side of this question is that advertising remains an important part of our culture and a force for attitude and behavior change. Looking back on 2016 right now, many people are interested in what effects political advertising had.

So maybe it isn’t so bad if at least some of our best minds are working on online advertising.

1. So often repeated that Hammerbacher said to Charlie Rose, “That’s going to be on my tombstone, I think.”

Total war, and armaments as “superior goods”

Hobsbawm on industrialization, mass mobilization, and “total war” in The Age of Extremes: A History of the World, 1914-1991 (ch. 1):

Jane Austen wrote her novels during the Napoleonic wars, but no reader who did not know this already would guess it, for the wars do not appear in her pages, even though a number of the young gentlemen who pass through them undoubtedly took part in them. It is inconceivable that any novelist could write about Britain in the twentieth-century wars in this manner.

The monster of twentieth-century total war was not born full-sized. Nevertheless, from 1914 on, wars were unmistakably mass wars. Even in the First World War Britain mobilized 12.5 per cent of its men for the forces, Germany 15.4 per cent, France almost 17 per cent. In the Second World War the percentage of the total active labour force that went into the armed forces was pretty generally in the neighborhood of 20 per cent (Milward, 1979, p. 216). We may note in passing that such a level of mass mobilization, lasting for a matter of years, cannot be maintained except by a modern high-productivity industrialized economy, and – or alternatively – an economy largely in the hands of the non-combatant parts of the population. Traditional agrarian economies cannot usually mobilize so large a proportion of their labour force except seasonally, at least in the temperate zone, for there are times in the agricultural year when all hands are needed (for instance to get in the harvest). Even in industrial societies so great a manpower mobilization puts enormous strains on the labour force, which is why modern mass wars both strengthened the powers of organized labour and produced a revolution in the employment of women outside the household: temporarily in the First World War, permanently in the Second World War.

A superior good is something that one purchases more of as income rises. Here it is appealing, at least metaphorically, to see the huge expenditures on industrial armaments as revealing arms to be superior goods in this sense.
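One way to make this definition precise is the income elasticity of demand, a standard textbook quantity that Hobsbawm's passage does not itself use. Here $q$ (quantity purchased) and $m$ (income) are symbols introduced only for this sketch:

```latex
\varepsilon_m \;=\; \frac{\partial q}{\partial m}\cdot\frac{m}{q}
```

Purchasing more as income rises corresponds to $\varepsilon_m > 0$. Some texts reserve "superior good" for the stricter case $\varepsilon_m > 1$, in which the share of income spent on the good also rises with income.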

Political arithmetic: The Joy of Stats

The Joy of Stats with Hans Rosling is quite engaging — and worth watching. I really enjoyed the historical threads running through the piece. I think he’s right to emphasize how data collection by states — to understand and control their populations — is at the origin of statistics. With increasing data collection today, this is a powerful and necessary reminder of the range of ends to which data analysis can be put.

Like others, I found the scenes with Rosling behind a bubble plot made difficult by the distracting lights and windows in the background. And the ending — with analyzing “what it means to be human” — was a bit much for me. But a small complaint about a compelling view.

Ideas behind their time: formal causal inference?

Alex Tabarrok at Marginal Revolution blogs about how some ideas seem notably behind their time:

We are all familiar with ideas said to be ahead of their time, Babbage’s analytical engine and da Vinci’s helicopter are classic examples. We are also familiar with ideas “of their time,” ideas that were “in the air” and thus were often simultaneously discovered such as the telephone, calculus, evolution, and color photography. What is less commented on is the third possibility, ideas that could have been discovered much earlier but which were not, ideas behind their time.

In comparing ideas behind and ahead of their times, it’s worth considering the processes that identify them as such.

In the case of ideas ahead of their time, we rely on records and other evidence of their genesis (e.g., accounts of the use of flamethrowers at sea by the Byzantines). Later users and re-discoverers of these ideas are then in a position to marvel at their early genesis. In trying to see whether some idea qualifies as ahead of its time, this early genesis, lack of use or underuse, followed by extensive use and development, together serve as evidence for “ahead of its time” status.

On the other hand, in identifying ideas behind their time, it seems that we need different sorts of evidence. Tabarrok uses the standard of whether their fruits could have been produced a long time earlier (“A lot of the papers in say experimental social psychology published today could have been written a thousand years ago so psychology is behind its time”). We need evidence that people in a previous time had all the intellectual resources to generate and see the use of the idea. Perhaps this makes identifying ideas behind their time harder or more contentious.

Y(X = x) and P(Y | do(x))

Perhaps formal causal inference, along with some kind of corresponding new notation such as Pearl’s do(x) operator or potential outcomes, is an idea behind its time.[1] Judea Pearl’s account of the history of structural equation modeling seems to suggest just this: exactly what the early developers of path models (Wright, Haavelmo, Simon) needed was new notation that would have allowed them to distinguish what they were doing (making causal claims with their models) from what others were already doing (making statistical claims).[2]

In fact, in his recent talk at Stanford, Pearl suggested just this: if, say, the equality operator = had been replaced with some kind of assignment operator (say, :=), formal causal inference might have developed much earlier. We might be a lot further along in social science and applied evaluation of interventions if this had happened.
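To illustrate why the notation matters, here is a minimal sketch of a toy structural model in which each variable is assigned (:=) from its causes. Everything in it is an assumption made up for illustration: the variables U, X, Y, the probabilities, and the function names `sample` and `mean_y`. It is not taken from Pearl's talk or book. Conditioning on X = x filters the observed data, while do(x) overwrites the assignment for X, and the two give different answers when an unobserved U affects both X and Y.

```python
import random

def sample(n, do_x=None, seed=0):
    """Draw n (x, y) pairs from a hypothetical structural model:
         U := Bernoulli(0.5)                     (unobserved confounder)
         X := U with probability 0.9, else 1 - U
         Y := Bernoulli(0.2 + 0.3 * X + 0.4 * U)
    Passing do_x replaces the assignment for X with a constant,
    which is the intervention written do(X = do_x)."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        u = int(rng.random() < 0.5)
        if do_x is None:
            x = u if rng.random() < 0.9 else 1 - u  # X listens to U
        else:
            x = do_x                                # X no longer listens to U
        y = int(rng.random() < 0.2 + 0.3 * x + 0.4 * u)
        rows.append((x, y))
    return rows

def mean_y(rows, x):
    """Average Y among the rows where X equals x."""
    ys = [y for xi, y in rows if xi == x]
    return sum(ys) / len(ys)

n = 200_000

# Statistical claim: E[Y | X = 1] - E[Y | X = 0], estimated by filtering.
obs = sample(n)
observational_gap = mean_y(obs, 1) - mean_y(obs, 0)

# Causal claim: E[Y | do(X = 1)] - E[Y | do(X = 0)], estimated by intervening.
interventional_gap = (mean_y(sample(n, do_x=1, seed=1), 1)
                      - mean_y(sample(n, do_x=0, seed=2), 0))

print(f"observational gap:  {observational_gap:.3f}")
print(f"interventional gap: {interventional_gap:.3f}")
```

Under these made-up parameters the population values work out to 0.62 for the observational gap and 0.30 for the interventional one, so the simulated estimates should land near those figures. Read with =, the equation for Y looks symmetric and purely statistical. Read with :=, it says what happens to Y when X is set from outside.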

This example raises some questions about the criterion for ideas behind their time that “people in a previous time had all the intellectual resources to generate and see the use of the idea” (above). Pearl is a computer scientist by training and credits this background with his approach to causality as a problem of getting the formal language right, or of moving between multiple formal languages. So we may owe this recent development to comfort with creating and evaluating the qualities of formal languages for practical purposes, a comfort found among computer scientists. Of course, philosophers and logicians, for example, have also long been comfortable with generating new formalisms. I think of Frege here.

So I’m not sure whether formal causal inference is an idea behind its time (or, if so, how far behind). But I’m glad we have it now.

1. There is a “lively” debate about the relative value of these formalisms. For many of the dense causal models applicable to the social sciences (everything is potentially a confounder), potential outcomes seem like a good fit. But they can become awkward as the causal models get complex, with many exclusion restrictions (i.e. missing edges).

2. See chapter 5 of Pearl, J. (2009). Causality: Models, Reasoning and Inference. 2nd Ed. Cambridge University Press.