New research on social media during the 2020 election, and my predictions

This is crossposted from Statistical Modeling, Causal Inference, and Social Science.

Back in 2020, leading academics and researchers at the company now known as Meta put together a large project to study social media and the 2020 US elections — particularly the roles of Instagram and Facebook. As Sinan Aral and I had written about how many paths for understanding effects of social media in elections could require new interventions and/or platform cooperation, this seemed like an important development. Originally the idea was for this work to be published in 2021, but there have been some delays, including simply because some of the data collection was extended as what one might call “election-related events” continued beyond November and into 2021. As of 2pm Eastern today, the news embargo for this work has been lifted on the first group of research papers.

I had heard about this project a long time ago and, frankly, had largely forgotten about it. But this past Saturday, I was participating in the SSRC Workshop on the Economics of Social Media and one session was dedicated to results-free presentations about this project, including the setup of the institutions involved and the design of the research. The organizers informally polled us with qualitative questions about some of the results. This intrigued me. I had recently reviewed an unrelated paper that included survey data from experts and laypeople about their expectations about the effects estimated in a field experiment, and I thought this data was helpful for contextualizing what “we” learned from that study.

So I thought it might be useful, at least for myself, to spend some time eliciting my own expectations about the quantities I understood would be reported in these papers. I’ve mainly kept up with the academic and grey literature, I’d previously worked in the industry, and I’d reviewed some of this for my Senate testimony back in 2021. Along the way, I tried to articulate where my expectations and remaining uncertainty were coming from. I composed many of my thoughts on my phone Monday while taking the subway to and from the storage unit I was revisiting and then emptying in Brooklyn. I got a few comments from Solomon Messing and Tom Cunningham, and then uploaded my notes to OSF and posted a cheeky tweet.

Since then, starting yesterday, I’ve spoken with journalists and gotten to view the main text of papers for three of the randomized interventions for which I made predictions. These evaluated effects of (a) switching Facebook and Instagram users to a (reverse) chronological feed, (b) removing “reshares” from Facebook users’ feeds, and (c) downranking content by “like-minded” users, Pages, and Groups.

My guesses

My main expectations for those three interventions could be summed up as follows. These interventions, especially chronological ranking, would each reduce engagement with Facebook or Instagram. This makes sense if you think the status quo is somewhat-well optimized for showing engaging and relevant content. So some of the rest of the effects — on, e.g., polarization, news knowledge, and voter turnout — could be partially inferred from that decrease in use. This would point to reductions in news knowledge, issue polarization (or coherence/consistency), and small decreases in turnout, especially for chronological ranking. This is because people get some hard news and political commentary they wouldn’t have otherwise from social media. These reduced-engagement-driven effects should be weakest for the “soft” intervention of downranking some sources, since content predicted to be particularly relevant will still make it into users’ feeds.

Besides just reducing Facebook use (and everything that goes with that), I also expected swapping out feed ranking for reverse chron would expose users to more content from non-friends via, e.g., Groups, including large increases in untrustworthy content that would normally rank poorly. I expected some of the same would happen from removing reshares, which I expected would make up over 20% of views under the status quo, and so would be filled in by more Groups content. For downranking sources with the same estimated ideology, I expected this would reduce exposure to political content, as much of the non-same-ideology posts will be by sources with estimated ideology in the middle of the range, i.e. [0.4, 0.6], which are less likely to be posting politics and hard news. I’ll also note that much of my uncertainty about how chronological ranking would perform was because there were a lot of unknown but important “details” about implementation, such as exactly how much of the ranking system really gets turned off (e.g., how much likely spam/scam content still gets filtered out in an early stage?).

How’d I do?

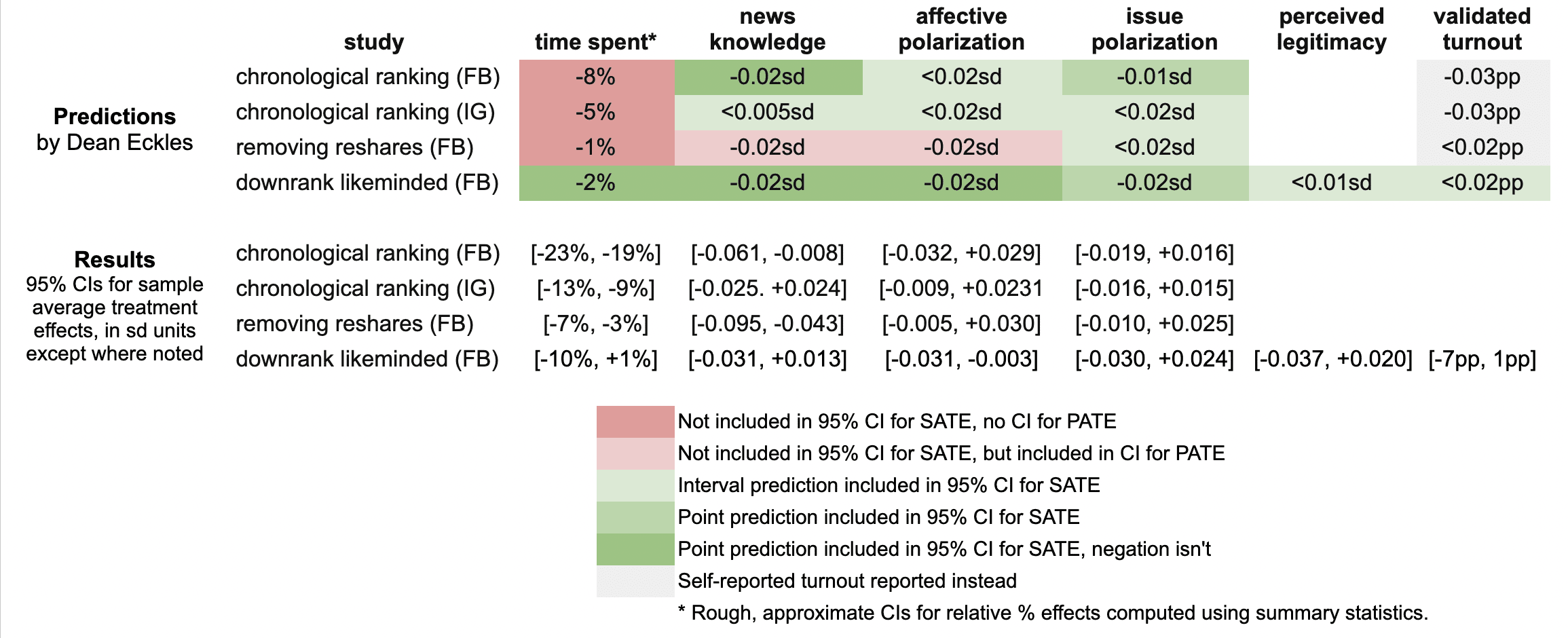

Here’s a quick summary of my guesses and the results in these three papers:

It looks like I was wrong in that the reductions in engagement were larger than I predicted: e.g., chronological ranking reduced time spent on Facebook by 21%, rather than the 8% I guessed, which was based on my background knowledge, a leaked report on a Facebook experiment, and this published experiment from Twitter.

Ex post I hypothesize that this is because the duration of these experiments allowed for continual declines in use over months, with various feedback loops (e.g., users with chronological feed log in less, so they post less, so they get fewer likes and comments, so they log in even less and post even less). As I dig into the 100s of pages of supplementary materials, I’ll be looking to understand what these declines looked like at earlier points in the experiment, such as by election day.

My estimates for the survey-based outcomes of primary interest, such as polarization, were mainly covered by the 95% confidence intervals, with the exception of two outcomes from the “no reshares” intervention.

One thing is that all these papers report weighted estimates for a broader population of US users (population average treatment effects, PATEs), which are less precise than the unweighted (sample average treatment effect, SATE) results. Here I focus mainly on the unweighted results, as I did not know there was going to be any weighting and these are also the more narrow, and thus riskier, CIs for me. (There seems to have been some mismatch between the outcomes listed in the talk I saw and what’s in the papers, so I didn’t make predictions for some reported primary outcomes and some outcomes I made predictions for don’t seem to be reported, or I haven’t found them in the supplements yet.)

Now is a good time to note that I basically predicted what psychologists armed with Jacob Cohen’s rules of thumb might, by extrapolation, call “minuscule” effect sizes. All my predictions for survey-based outcomes were 0.02 standard deviations or smaller. (Recall Cohen’s rules of thumb say 0.2 is small, 0.5 medium, and 0.8 large.)

Nearly all the results for these outcomes in these three papers were indistinguishable from the null (p > 0.05), with standard errors for survey outcomes at 0.01 SDs or more. This is consistent with my ex ante expectations that the experiments would face severe power problems, at least for the kind of effects I would expect. Perhaps by revealed preference, a number of other experts had different priors.
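To make the power problem concrete, here is a back-of-the-envelope sketch. It assumes the estimate is approximately normal and uses a standard error of 0.01 SDs (the lower end of what is reported above) together with a true effect of 0.02 SDs (the largest magnitude I predicted). The function names are mine, for illustration only.

```python
from statistics import NormalDist

def power_two_sided(effect, se, alpha=0.05):
    """Approximate power of a two-sided z-test for a given true effect and standard error."""
    z = NormalDist()
    crit = z.inv_cdf(1 - alpha / 2)      # about 1.96 for alpha = 0.05
    shift = effect / se                  # true effect in standard-error units
    # Probability that the z-statistic lands in either rejection region.
    return (1 - z.cdf(crit - shift)) + z.cdf(-crit - shift)

def minimum_detectable_effect(se, alpha=0.05, power=0.8):
    """Smallest true effect detected with the requested power (normal approximation)."""
    z = NormalDist()
    return (z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)) * se

power_at_guess = power_two_sided(effect=0.02, se=0.01)
mde = minimum_detectable_effect(se=0.01)
print(round(power_at_guess, 2), round(mde, 3))
```

Under these assumptions, a true effect of 0.02 SDs would be detected only about half the time, and the effect would need to be roughly 0.028 SDs to reach 80% power.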

A rare p < 0.05 result is that chronological ranking reduced news knowledge by 0.035 SDs with 95% CI [-0.061, -0.008], which includes my guess of -0.02 SDs. Removing reshares may have reduced news knowledge even more than chronological ranking, and by more than I guessed.

Even with so many null results I was still sticking my neck out a bit compared with just guessing zero everywhere, since in some cases if I had put the opposite sign my estimate wouldn’t have been in the 95% CI. For example, downranking “like-minded” sources produced a CI of [-0.031, 0.013] SDs, which includes my guess of -0.02, but not its negation. On the other hand, I got some of these wrong: I guessed removing reshares would reduce affective polarization, but a 0.02 SD reduction (i.e., -0.02 SDs) is outside the resulting [-0.005, +0.030] interval.
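The bookkeeping behind these comparisons is simple enough to script. The sketch below uses only the intervals and guesses quoted in this post, encoding a guessed reduction as a negative number; the labels and the `covers` helper are illustrative names, not anything from the papers.

```python
def covers(ci, value):
    """Return True if value lies inside the closed interval ci = (low, high)."""
    low, high = ci
    return low <= value <= high

# (label, 95% CI in SDs, my guess in SDs), all taken from the text above.
checks = [
    ("chronological feed: news knowledge", (-0.061, -0.008), -0.02),
    ("like-minded downranking: outcome quoted above", (-0.031, 0.013), -0.02),
    ("no reshares: affective polarization", (-0.005, 0.030), -0.02),
]

results = {}
for label, ci, guess in checks:
    # Record whether the guess, and its sign-flipped version, fall inside the CI.
    results[label] = (covers(ci, guess), covers(ci, -guess))
    print(label, "| guess covered:", results[label][0], "| negation covered:", results[label][1])
```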

It was actually quite a bit of work to compare my predictions to the results because I didn’t really know a lot of key details about exact analyses and reporting choices, which strikingly even differ a bit across these three papers. So I might yet find more places where I can, with a lot of reading and a bit of arithmetic, figure out where else I may have been wrong. (Feel free to point these out.)

Further reflections

I hope that this helps to contextualize the present results with expert consensus — or at least my idiosyncratic expectations. I’ll likely write a bit more about these new papers and further work released as part of this project.

It was probably an oversight for me not to make any predictions about the observational paper looking at polarization in exposure and consumption of news media. I felt like I had a better handle on thinking about simple treatment effects than these measures, but perhaps that was all the more reason to make predictions. Furthermore, given the limited precision of the experiments’ estimates, perhaps it would have been more informative (and riskier) to make point predictions about these precisely estimated observational quantities.

[I want to note that I was an employee or contractor of Facebook (now Meta) from 2010 through 2017. I have received funding for other research from Meta, Meta has sponsored a conference I organize, and I have coauthored with Meta employees as recently as earlier this month. I was also recently a consultant to Twitter, ending shortly after the Musk acquisition. You can find all my disclosures here.]

Using covariates to increase the precision of randomized experiments

A simple difference-in-means estimator of the average treatment effect (ATE) from a randomized experiment is, being unbiased, a good start, but may often leave a lot of additional precision on the table. Even if you haven’t used covariates (pre-treatment variables observed for your units) in the design of the experiment (e.g., this is often difficult to do in streaming random assignment in Internet experiments; see our paper), you can use them to increase the precision of your estimates in the analysis phase. Here are some simple ways to do that. I’m not including a whole range of more sophisticated/complicated approaches. And, of course, if you don’t have any covariates for the units in your experiments (or they aren’t very predictive of your outcome), none of this will help you much.

Post-stratification

Prior to the experiment you could do stratified randomization (i.e. blocking) according to some categorical covariate (making sure that there are the same number of, e.g., each gender, country, paid/free accounts in each treatment). But you can also do something similar after: compute an ATE within each stratum and then combine the strata-level estimates, weighting by the total number of observations in each stratum. For details, and for proofs showing this often won’t be much worse than blocking, consult Miratrix, Sekhon & Yu (2013).
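As a minimal sketch of the point estimate described above (standard library only, with a hypothetical `post_stratified_ate` function and toy data that are not from any real experiment):

```python
from collections import defaultdict
from statistics import mean

def post_stratified_ate(rows):
    """Post-stratified ATE estimate.

    rows: iterable of (stratum, treated, outcome), with treated in {0, 1}.
    """
    by_stratum = defaultdict(lambda: {0: [], 1: []})
    for stratum, treated, outcome in rows:
        by_stratum[stratum][treated].append(outcome)

    n_total = sum(len(g[0]) + len(g[1]) for g in by_stratum.values())
    estimate = 0.0
    for stratum, g in by_stratum.items():
        if not g[0] or not g[1]:
            raise ValueError(f"stratum {stratum!r} lacks treated or control units")
        n_stratum = len(g[0]) + len(g[1])
        # Within-stratum difference in means, weighted by the stratum's share of units.
        estimate += (n_stratum / n_total) * (mean(g[1]) - mean(g[0]))
    return estimate

# Toy data: account type is the stratifying covariate.
toy_rows = (
    [("free", 1, 2.0), ("free", 1, 4.0), ("free", 0, 1.0), ("free", 0, 1.0)]
    + [("paid", 1, 10.0), ("paid", 1, 12.0)]
    + [("paid", 0, 8.0)] * 4
)
toy_estimate = post_stratified_ate(toy_rows)
print(toy_estimate)
```

This returns only the point estimate. A stratum with no treated or no control units has no within-stratum contrast, so the sketch raises an error rather than guessing how to handle it.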

Regression adjustment with a single covariate

Often what you most want to adjust for is a single numeric covariate,1 such as a lagged version of your outcome (i.e., your outcome from some convenient period before treatment). You can simply use ordinary least squares regression to adjust for this covariate by regressing your outcome on both a treatment indicator and the covariate. Even better (particularly if treatment and control are different sized by design), you should regress your outcome on: a treatment indicator, the covariate centered such that it has mean zero, and the product of the two.2 Asymptotically (and usually in practice with a reasonably sized experiment), this will increase precision and it is pretty easy to do. For more on this, see Lin (2012).
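Here is a dependency-free sketch of the interacted regression for a single covariate. It relies on the fact that a regression with a treatment indicator, a centered covariate, and their product is algebraically the same as fitting a separate line in each arm and comparing the two fitted lines at the overall covariate mean. The function names and toy data are illustrative assumptions, and only the point estimate is computed.

```python
from statistics import mean

def ols_slope(x, y):
    """Slope from a simple least-squares regression of y on x."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    return sxy / sxx

def interacted_adjusted_ate(treated, x, y):
    """Coefficient on treatment from regressing y on treatment, centered x, and their product."""
    x_bar = mean(x)  # centering uses the covariate mean over all units
    fitted_at_mean = {}
    for arm in (0, 1):
        x_arm = [xi for t, xi in zip(treated, x) if t == arm]
        y_arm = [yi for t, yi in zip(treated, y) if t == arm]
        slope = ols_slope(x_arm, y_arm)
        # Arm-specific fitted line evaluated at the overall covariate mean.
        fitted_at_mean[arm] = mean(y_arm) + slope * (x_bar - mean(x_arm))
    return fitted_at_mean[1] - fitted_at_mean[0]

# Toy data: outcome is 1 + 2x in control and 4 + 2x in treatment (true effect 3),
# with the treated units happening to have larger covariate values.
toy_treated = [0, 0, 0, 1, 1, 1]
toy_x = [1.0, 2.0, 3.0, 2.0, 4.0, 6.0]
toy_y = [3.0, 5.0, 7.0, 8.0, 12.0, 16.0]
adjusted = interacted_adjusted_ate(toy_treated, toy_x, toy_y)
raw = mean(toy_y[3:]) - mean(toy_y[:3])
print(adjusted, raw)
```

In the toy data the raw difference in means is 7 because of chance covariate imbalance, while the adjusted estimate recovers 3. Standard errors are a separate matter that this sketch does not cover.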

Higher-dimensional adjustment

If you have a lot more covariates to adjust for, you may want to use some kind of penalized regression. For example, you could use the Lasso (L1-penalized regression); see Bloniarz et al. (2016).

Use out-of-sample predictions from any model

Maybe you instead want to use neural nets, trees, or an ensemble of a bunch of models? That’s fine, but if you want to be able to do valid statistical inference (i.e., get 95% confidence intervals that actually cover 95% of the time), you have to be careful. The easiest way to be careful in many Internet industry settings is just to use historical data to train the model and then get out-of-sample predictions Yhat from that model for your present experiment. You then just subtract Yhat from Y and use the simple difference-in-means estimator. Aronow and Middleton (2013) provide some technical details and extensions. A simple extension that makes this more robust to changes over time is to use this out-of-sample Yhat as a covariate, as described above.3
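The subtract-then-compare step can be sketched as follows. The key assumption, stated in the text, is that `y_hat` comes from a model trained only on historical data, so the predictions cannot depend on treatment assignment in the current experiment. The function name and the numbers are made up for illustration.

```python
from statistics import mean

def residualized_difference_in_means(treated, y, y_hat):
    """Difference in means of (Y - Yhat) between treatment and control."""
    residuals = [yi - hi for yi, hi in zip(y, y_hat)]
    treated_resid = [r for t, r in zip(treated, residuals) if t == 1]
    control_resid = [r for t, r in zip(treated, residuals) if t == 0]
    return mean(treated_resid) - mean(control_resid)

# Toy example: y_hat stands in for predictions from a model fit on historical data.
toy_treated = [1, 1, 0, 0]
toy_y = [12.0, 32.0, 10.0, 19.0]
toy_y_hat = [10.0, 30.0, 10.0, 20.0]
estimate = residualized_difference_in_means(toy_treated, toy_y, toy_y_hat)
print(estimate)
```

The better the historical model predicts the outcome, the less residual variance is left, and so the more precise this estimate is relative to the raw difference in means.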

1. As Winston Lin notes in the comments and as is implicit in my comparison with post-stratification, as long as the number of covariates is small and not growing with sample size, the same asymptotic results apply.

2. Note that if the covariate is binary or, more generally, categorical, then this exactly coincides with the post-stratified estimator considered above.

3. I added this sentence in response to Winston Lin’s comment.

A deluge of experiments

The Atlantic reports on the data deluge and its value for innovation.1 I particularly liked how Erik Brynjolfsson and Andrew McAfee, who wrote the Atlantic piece, highlight the value of experimentation for addressing causal questions — and that many of the questions we care about are causal.2

In writing about experimentation, they report that Hal Varian, Google’s Chief Economist, estimates that Google runs “100-200 experiments on any given day”. This struck me as incredibly low! I would have guessed more like 10,000 or maybe more like 100,000.

The trick of course is how one individuates experiments. Say Google has an automatic procedure whereby each ad has a (small) random set of users who are prevented from seeing it and are shown the next best ad instead. Is this one giant experiment? Or one experiment for each ad?

This is a bit of a silly question.3

But when most people — even statisticians and scientists — think of an experiment in this context, they think of something like Google or Amazon making a particular button bigger. (Maybe somebody thought making that button bigger would improve a particular metric.) They likely don’t think of automatically generating an experiment for every button, such that a random sample see that particular button slightly bigger. It’s these latter kinds of procedures that lead to thinking about tens of thousands of experiments.

That’s the real deluge of experiments.
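To illustrate what such an automatic procedure might look like, here is a hypothetical sketch in which every item (an ad, a button variant) gets its own small holdout group, chosen by hashing the user and item identifiers together. This is an assumption for illustration only, not a description of how Google or anyone else actually assigns users.

```python
import hashlib

def in_holdout(user_id: str, item_id: str, rate: float = 0.01) -> bool:
    """Deterministically place roughly `rate` of users in the holdout for a given item."""
    digest = hashlib.sha256(f"{item_id}:{user_id}".encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1).
    bucket = (int.from_bytes(digest[:8], "big") % 1_000_000) / 1_000_000
    return bucket < rate

# Each item defines its own experiment over the same users.
users = [f"user{i}" for i in range(10_000)]
holdout_sizes = {
    item: sum(in_holdout(u, item, rate=0.1) for u in users)
    for item in ("ad_a", "ad_b")
}
print(holdout_sizes)
```

Because the item identifier is part of the hash input, assignment for one item is effectively independent of assignment for another, so each new item adds one more experiment without anyone designing it by hand.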

1. I don’t know that I would call much of it ‘innovation’. There is some outright innovation, but a lot of that is in the general strategies for using the data. There is much more gained in minor tweaking and optimization of products and services.

2. Perhaps they even overstate the power of simple experiments. For example, they do not mention the fact that the results of these kinds of experiments often change over time, so that what you learned 2 months ago is no longer true.

3. Note that two single-factor experiments over the same population with independent random assignment can be regarded as a single experiment with two factors.

Marginal evidence for psychological processes

Some comments on problems with investigating psychological processes using estimates of average (i.e. marginal) effects. Hence the play on words in the title.

Social psychology makes a lot of being theoretical. This generally means not just demonstrating an effect, but providing evidence about the psychological processes that produce it. Psychological processes are, it is agreed, intra-individual processes. To tell a story about a psychological process is to posit something going on “inside” people. It is quite reasonable that this is how social psychology should work — and it makes it consistent with much of cognitive psychology as well.

But the evidence that social psychology uses to support these theories about these intra-individual processes is largely evidence about effects of experimental conditions (or, worse, non-manipulated measures) averaged across many participants. That is, it is using estimates of marginal effects as evidence of conditional effects. This is intuitively problematic. Now, there is no problem when using experiments to study effects and processes that are homogenous in the population. But, of course, they aren’t: heterogeneity abounds. There is variation in how factors affect different people. This is why the causal inference literature has emphasized the differences among the average treatment effect, (average) treatment effect on the treated, local average treatment effect, etc.
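A small simulation makes the gap between marginal and conditional effects concrete. In this made-up population, the treatment moves every single person by one unit, up for half of them and down for the other half, yet a between-subjects experiment estimates an average effect near zero. All numbers and names here are illustrative assumptions.

```python
import random
from statistics import mean

def simulate_between_subjects(n=10_000, seed=0):
    """Randomized experiment in which each person has an individual effect of +1 or -1."""
    rng = random.Random(seed)
    rows = []
    for person in range(n):
        effect = 1.0 if person % 2 == 0 else -1.0   # person-specific treatment effect
        baseline = rng.gauss(0, 1)                  # outcome without treatment
        treated = rng.random() < 0.5                # coin-flip assignment
        outcome = baseline + effect if treated else baseline
        rows.append((treated, outcome, effect))
    return rows

rows = simulate_between_subjects()
ate_hat = mean(y for t, y, _ in rows if t) - mean(y for t, y, _ in rows if not t)
share_affected = mean(1.0 if e != 0 else 0.0 for _, _, e in rows)
print("estimated average effect:", round(ate_hat, 2))
print("share of people with a nonzero effect:", share_affected)
```

The estimate of the average effect is perfectly valid here. It simply says very little about what is going on inside any one person.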

Not only is this disconnect between marginal evidence and conditional theory trouble in the abstract, we know it has already produced many problems in the social psychology literature.1 Baron and Kenny (1986) is the most cited paper published in the Journal of Personality and Social Psychology, the leading journal in the field. It paints a rosy picture of what it is like to investigate psychological processes. The methods of analysis it proposes for investigating processes are almost ubiquitous in social psych.2 The trouble is that this approach is severely biased in the face of heterogeneity in the processes under study. This is usually described as a problem of correlated error terms, omitted-variables bias, or adjusting for post-treatment variables. This is all true. But, in the most common uses, it is perhaps more natural to think of it as a problem of mixing up marginal (i.e. average) and conditional effects.3

What’s the solution? First, it is worth saying that average effects are worth investigating! Especially if you are evaluating an intervention or drug that might really be used, or if you are working at another level of analysis than psychology. But if psychological processes are your thing, you must do better.

Social psychologists sometimes do condition on individual characteristics, but often this is a measure of a single trait (e.g., need for cognition) that cannot plausibly exhaust all (or even much) of the heterogeneity in the effects under study. Without much larger studies, they cannot condition on more characteristics because of estimation problems (too many parameters for their N). So there is bound to be substantial heterogeneity.

Beyond this, I think social psychology could benefit from a lot more within-subjects experiments. Modern statistical computing (e.g., tools for fitting mixed-effects or multilevel models) makes it possible — even easy — to use such data to estimate effects of the manipulated factors for each participant. If they want to make credible claims about processes, then within-subjects designs — likely with many measurements of each person — are a good direction to more thoroughly explore.
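As a rough sketch of what within-subjects data buys you, the simulation below gives each hypothetical participant many trials in both conditions and estimates that person's effect as a simple difference in their own means. This is a stand-in for the mixed-effects models mentioned above (it does no pooling across people), it ignores order and carryover effects, and all the numbers are invented.

```python
import random
from statistics import mean

def simulate_within_subjects(n_people=50, trials_per_condition=200, seed=1):
    """Each person is measured many times under control and treatment."""
    rng = random.Random(seed)
    true_effects, estimates = [], []
    for person in range(n_people):
        effect = 1.0 if person % 2 == 0 else -1.0   # person-specific effect
        baseline = rng.gauss(0, 1)                  # person-specific baseline
        control = [baseline + rng.gauss(0, 1) for _ in range(trials_per_condition)]
        treated = [baseline + effect + rng.gauss(0, 1) for _ in range(trials_per_condition)]
        true_effects.append(effect)
        # Per-person estimate: that person's treated mean minus their control mean.
        estimates.append(mean(treated) - mean(control))
    return true_effects, estimates

true_effects, estimates = simulate_within_subjects()
signs_recovered = sum((e > 0) == (t > 0) for e, t in zip(estimates, true_effects))
print(signs_recovered, "of", len(estimates), "effect directions recovered")
print("average of per-person estimates:", round(mean(estimates), 2))
```

With enough measurements per person, the direction of each individual's effect can be recovered even though the average across people is close to zero.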

1. The situation is bad enough that I (and some colleagues) certainly don’t even take many results in social psych as more than providing a possibly interesting vocabulary.

2. Luckily, my sense is that they are waning a bit, partially because of illustrations of the method’s bias.

3. To translate to the terms used before, note that we want to condition on unobserved (latent) heterogeneity. If one doesn’t, then there is omitted variable bias. This can be done with models designed for this purpose, such as random effects models.

Applying social psychology

Some reflections on how “quantitative” social psychology is and how this matters for its application to design and decision-making — especially in industries touched by the Internet.

In many ways, contemporary social psychology is dogmatically quantitative. Investigators run experiments, measure quantitative outcomes (even coding free responses to make them amenable to analysis), and use statistics to characterize the collected data. On the other hand, social psychology’s processes of stating and integrating its conclusions remain largely qualitative. Many hypotheses in social psychology state that some factor affects a process or outcome in one direction (i.e., “call” either beta > 0 or beta < 0). Reviews of research in social psychology often start with a simple effect and then note how many other variables moderate this effect. This is all quite fitting with the dominance of null-hypothesis significance testing (NHST) in much of psychology: rather than producing point estimates or confidence intervals for causal effects, it is enough to simply see how likely the observed data is given that there is no effect.1 Of course, there have been many efforts to change this. Many journals require reporting effect sizes. This is a good thing, but these effect sizes are rarely predicted by social psychological theory. Rather, they are reported to aid judgments of whether a finding is not only statistically significant but substantively or practically significant, and the theory predicts the direction of the effect.

Not only is this process of reporting and combining results not quantitative in many ways, but it requires substantial inference from the particular settings of conducted experiments to the present settings. This actually helps to make sense of the practices described above: many social psychology experiments are conducted in conditions and with populations that are so different from those in which people would like to apply the resulting theories, that expecting consistency of effect sizes is implausible.2 This is not to say that these studies cannot tell us a good deal about how people will behave in many circumstances. It's just that figuring out what they predict and whether these predictions are reliable is a very messy, qualitative process.

Thus, when it comes to making decisions -- about a policy, intervention, or service -- based on social-psychological research, this process is largely qualitative. Decision-makers can ask, which effects are in play? What is their direction? With interventions and measurement that are very likely different from the present case, how large were the effects?3

Sometimes this is the best that social science can provide. And such answers can be quite useful in design. The results of psychology experiments can often be very effective when used generatively. For example, designers can use taxonomies of persuasive strategies to dream up some ways of producing desired behavior change.

Nonetheless, I think all this can be contrasted with some alternative practices that are both more quantitative and require less of this uneasy generalization. First, social scientists can give much more attention to point estimates of parameters. While not without its (other) flaws, the economics literature on financial returns to education has aimed to provide, criticize, and refine estimates of just how much wages increase (on average) with more education.4

Second, researchers can avoid much of the messiest kinds of generalization altogether. Within the Internet industry, product optimization experiments are ubiquitous. Google, Yahoo, Facebook, Microsoft, and many others are running hundreds to thousands of simultaneous experiments with parts of their services. This greatly simplifies generalization: the exact intervention under consideration has just been tried with a random sample from the very population it will be applied to. If someone wants to tweak the intervention, just try it again before launching. This process still involves human judgment about how to react to these results.5 An even more extreme alternative is when machine learning is used to fine-tune, e.g., recommendations without direct involvement (or understanding) by humans.

So am I saying that social psychology (at least as an enterprise that is useful to designers and decision-makers) is going to be replaced by simple “bake-off” experiments and machine learning? Not quite. Unlike product managers at Google, many decision-makers don’t have the ability to cheaply test a proposed intervention on their population of interest.6 Even at Google, many changes (or new products) under consideration are too difficult to build for all of them to be tested: one has to decide among an overabundance of options before the most directly applicable data could be available. This is consistent with my note above that social-psychological findings can make excellent inspiration during idea generation and early evaluation.

1. To parrot Andrew Gelman, in social phenomena, everything affects everything else. There are no betas that are exactly zero.

2. It's also often implausible that the direction of the effect must be preserved.

3. Major figures in social psychology, such as Lee Ross, have worked on trying to better anticipate the effects of social interventions from theory. It isn’t easy.

4. The diversity of the manipulations used by social psychologists ostensibly studying the same thing can make this more difficult.

5. Generalization is not avoided. In particular, decision-makers often have to consider what would happen if an intervention tested with 1% of the population is launched for the whole population. There are all kinds of issues relating to peer influence, network effects, congestion, etc., here that don’t allow for simple extrapolation from the treatment effects identified by the experiment. Nonetheless, these challenges obviously apply to most research that aims to predict the effects of causes.

6. However, Internet services play a more and more central role in many parts of our life, so this doesn’t just have to be limited to the Internet industry itself.