Selecting effective means to any end

How are psychographic personalization and persuasion profiling different from more familiar forms of personalization and recommendation systems? A big difference is that they focus on selecting the “how” or the “means” of inducing you to an action — rather than selecting the “what” or the “ends”. Given the recent interest in this kind of personalization, I wanted to highlight some excerpts from something Maurits Kaptein and I wrote in 2010.1

This post excerpts our 2010 article, a version of which was published as:

Kaptein, M., & Eckles, D. (2010). Selecting effective means to any end: Futures and ethics of persuasion profiling. In International Conference on Persuasive Technology (pp. 82-93). Springer Lecture Notes in Computer Science.

For more on this topic, see these papers.

We distinguish between those adaptive persuasive technologies that adapt the particular ends they try to bring about and those that adapt their means to some end.

First, there are systems that use models of individual users to select particular ends that are instantiations of more general target behaviors. If the more general target behavior is book buying, then such a system may select which specific books to present.

Second, adaptive persuasive technologies that change their means adapt the persuasive strategy that is used, independent of the end goal. One could offer the same book and, for some people, show the message that the book is recommended by experts, while for others emphasizing that the book is almost out of stock. Both messages may be true, but the effect of each differs between users.

Example 2. Ends adaptation in recommender systems

Pandora is a popular music service that tries to engage music listeners and persuade them to spend more time on the site and, ultimately, to subscribe. For both goals it is beneficial for Pandora if users enjoy the music that is presented to them, which it achieves by matching the music offered to individual, potentially latent, music preferences. In doing so, Pandora adaptively selects the end (the actual song that is listened to and that could be purchased) rather than the means (the reasons presented for the selection of one specific song).

The distinction between end-adaptive persuasive technologies and means-adaptive persuasive technologies is important to discuss since adaptation in the latter case could be domain independent. In end adaptation, we can expect that little of the knowledge of the user that is gained by the system can be used in other domains (e.g. book preferences are likely minimally related to optimally specifying goals in a mobile exercise coach). Means adaptation is potentially quite the opposite. If an agent expects that a person is more responsive to authority claims than to other influence strategies in one domain, it may well be that authority claims are also more effective for that user than other strategies in a different domain. While we focus on novel means-adaptive systems, it is actually quite common for human influence agents to adaptively select their means.

Influence Strategies and Implementations

Means-adaptive systems select different means by which to bring about some attitude or behavior change. The distinction between adapting means and ends is an abstract and heuristic one, so it will be helpful to describe one particular way to think about means in persuasive technologies. One way to individuate means of attitude and behavior change is to identify distinct influence strategies, each of which can have many implementations. Investigators studying persuasion and compliance-gaining have varied in how they individuate influence strategies: Cialdini [5] elaborates on six strategies at length, Fogg [8] describes 40 strategies under a more general definition of persuasion, and others have listed over 100 [16].

Despite this variation in their individuation, influence strategies are a useful level of analysis that helps to group and distinguish specific influence tactics. In the context of means adaptation, human and computer persuaders can select influence strategies they expect to be more effective than other influence strategies. In particular, the effectiveness of a strategy can vary with attitude and behavior change goals. Different influence strategies are most effective in different stages of the attitude to behavior continuum [1]. These range from use of heuristics in the attitude stage to use of conditioning when a behavioral change has been established and needs to be maintained [11]. Fogg [10] further illustrates this complexity and the importance of considering variation in target behaviors by presenting a two-dimensional matrix of 35 classes of behavior change that vary by (1) the schedule of change (e.g., one time, on cue) and (2) the type of change (e.g., perform new behavior vs. familiar behavior). So even for persuasive technologies that do not adapt to individuals, selecting an influence strategy (the means) is important. We additionally contend that influence strategies are also a useful way to represent individual differences [9], differences which may be large enough that strategies that are effective on average have negative effects for some people.

Example 4. Backfiring of influence strategies

John just subscribed to a digital workout coaching service. This system measures his activity using an accelerometer and provides John feedback through a Web site. This feedback is accompanied by recommendations from a general practitioner to modify his workout regime. John has all through his life been known as authority averse and dislikes the top-down recommendation style used. After three weeks using the service, John’s exercise levels have decreased.

Persuasion Profiles

When systems represent individual differences as variation in responses to influence strategies — and adapt to these differences, they are engaging in persuasion profiling. Persuasion profiles are thus collections of expected effects of different influence strategies for a specific individual. Hence, an individual’s persuasion profile indicates which influence strategies — one way of individuating means of attitude and behavior change — are expected to be most effective.
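The definition above can be sketched as a small data structure. This is only an illustration: the class name, the strategy labels, and the numbers are assumptions made for the example, not something specified in the paper.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class PersuasionProfile:
    """Expected effects of influence strategies for one individual (hypothetical structure)."""

    user_id: str
    # Maps a strategy name to the estimated effect of using that strategy
    # on this person, e.g. a change in the probability of compliance.
    expected_effects: Dict[str, float] = field(default_factory=dict)

    def best_strategy(self) -> str:
        """Return the strategy with the largest expected effect."""
        if not self.expected_effects:
            raise ValueError("profile has no estimated effects yet")
        return max(self.expected_effects, key=self.expected_effects.get)


# Made-up numbers for an authority-averse user such as John in Example 4:
# the authority strategy is expected to backfire (negative effect).
john = PersuasionProfile(
    user_id="john",
    expected_effects={"authority": -0.05, "scarcity": 0.02, "consensus": 0.08},
)
print(john.best_strategy())
```

Nothing in the structure refers to a particular end (a book, an exercise goal), which is what makes such a profile portable across contexts.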

Persuasion profiles can be based on demographic, personality, and behavioral data. Relying primarily on behavioral data has recently become a realistic option for interactive technologies, since vast amounts of data about individuals’ behavior in response to attempts at persuasion are currently collected. These data describe how people have responded to presentations of certain products (e.g. e-commerce) or have complied with requests by persuasive technologies (e.g. the DirectLife Activity Monitor [12]).

Existing systems record responses to particular messages (implementations of one or more influence strategies) to aid profiling. For example, Rapleaf uses responses by a user’s friends to particular advertisements to select the message to present to that user [2]. If influence attempts are identified as being implementations of particular strategies, then such systems can “borrow strength” in predicting responses to other implementations of the same strategy or related strategies. Many of these scenarios also involve the collection of personally identifiable information, so persuasion profiles can be associated with individuals across different sessions and services.

Consequences of Means Adaptation

In the remainder of this paper we will focus on the implications of the usage of persuasion profiles in means-adaptive persuasive systems. There are two properties of these systems which make this discussion important:

1. End-independence: Contrary to profiles used by end-adaptive persuasive systems, the knowledge gained about people in means-adaptive systems can be used independent from the end goal. Hence, persuasion profiles can be used independent of context and can be exchanged between systems.

2. Undisclosed: While the adaptation in end-adaptive persuasive systems is often most effective when disclosed to the user, this is not necessarily the case in means-adaptive persuasive systems powered by persuasion profiles. Selecting a different influence strategy is likely less salient than changing a target behavior and thus will often not be noticed by users.

Although the previous examples and the discussion of adaptive persuasive systems have already hinted at these two notions, we feel it is important to examine each in more detail.

End-Independence

Means-adaptive persuasive technologies are distinctive in their end-independence: a persuasion profile created in one context can be applied to bringing about other ends in that same context or to behavior or attitude change in a quite different context. This feature of persuasion profiling is best illustrated by contrast with end adaptation.

Any adaptation that selects the particular end (or goal) of a persuasive attempt is inherently context-specific. Though there may be associations between individual differences across context (e.g., between book preferences and political attitudes) these associations are themselves specific to pairs of contexts. On the other hand, persuasion profiles are designed and expected to be independent of particular ends and contexts. For example, we propose that a person’s tendency to comply more to appeals by experts than to those by friends is present both when looking at compliance to a medical regime as well as purchase decisions.

It is important to clarify exactly what is required for end-independence to obtain. If we say that a persuasion profile is end-independent then this does not imply that the effectiveness of influence strategies is constant across all contexts. Consistent with the results reviewed in section 3, we acknowledge that influence strategy effectiveness depends on, e.g., the type of behavior change. That is, we expect that the most effective influence strategy for a system to employ, even given the user’s persuasion profile, would depend on both context and target behavior. Instead, end-independence requires that the difference between the average effect of a strategy for the population and the effect of that strategy for a specific individual is relatively consistent across contexts and ends.
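One way to write this requirement down is as a simple decomposition of effects. The notation is an assumption introduced here for illustration and does not appear in the original article. Let $y_{isc}$ be the effect of strategy $s$ on person $i$ in context $c$.

```latex
% \mu_{sc}:        average effect of strategy s in context c for the population
%                  (free to vary with context)
% \delta_{is}:     person i's deviation from that average for strategy s
%                  (the entry in the persuasion profile)
% \epsilon_{isc}:  whatever part of the person's deviation is specific to context c
y_{isc} = \mu_{sc} + \delta_{is} + \epsilon_{isc}

% End-independence does not require \mu_{sc} to be constant in c.
% It requires the context-specific remainder to be small relative to the profile:
\operatorname{Var}(\epsilon_{isc}) \ll \operatorname{Var}(\delta_{is})
\quad\Longrightarrow\quad
y_{isc} - \mu_{sc} \approx \delta_{is} \ \text{for every context } c
```

Under this reading, a profile learned in one context transfers to another to the extent that the remainder term stays small.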

Implications of end-independence.

From end-independence, it follows that persuasion profiles could potentially be created by, and shared with, a number of systems that use and modify these profiles. For example, the profile constructed from observing a user’s online shopping behavior can be of use in increasing compliance in saving energy. Behavioral measures in the latter two contexts can contribute to refining the existing profile.2

Not only could persuasion profiles be used across contexts within a single organization, but there is the option of exchanging the persuasion profiles between corporations, governments, other institutions, and individuals. A market for persuasion profiles could develop [9], as currently exists for other data about consumers. Even if a system that implements persuasion profiling does so ethically, once constructed the profiles can be used for ends not anticipated by its designers.

Persuasion profiles are another kind of information about individuals collected by corporations that individuals may or may not have effective access to. This raises issues of data ownership. Do individuals have access to their complete persuasion profiles or other indicators of the contents of the profiles? Are individuals compensated for this valuable information [14]? If an individual wants to use Amazon’s persuasion profile to jump-start a mobile exercise coach’s adaptation, there may or may not be technical and/or legal mechanisms to obtain and transfer this profile.

Non-disclosure

Means-adaptive persuasive systems are able and likely to not disclose that they are adapting to individuals. This can be contrasted with end adaptation, in which it is often advantageous for the agent to disclose the adaptation and in which the adaptation is potentially easy to detect. For example, when Amazon recommends books for an individual it makes clear that these are personalized recommendations, thus benefiting from effects of apparent personalization and enabling it to present reasons why these books were recommended. In contrast, with means adaptation, not only may the results of the adaptation be less visible to users (e.g. emphasizing either “Pulitzer Prize winning” or “International bestseller”), but disclosure of the adaptation may reduce the target attitude or behavior change.

It is hypothesized that the effectiveness of social influence strategies is, at least partly, caused by automatic processes. According to dual-process models [4], under low elaboration, message variables manipulated in the selection of influence strategies lead to compliance without much thought. These dual-process models distinguish between central (or systematic) processing, which is characterized by elaboration on and consideration of the merits of presented arguments, and peripheral (or heuristic) processing, which is characterized by responses to cues associated with, but peripheral to the central arguments of, the advocacy through the application of simple, cognitively “cheap”, but fallible rules [13]. Disclosure of means adaptation may increase elaboration on the implementations of the selected influence strategies, decreasing their effectiveness if they operate primarily via heuristic processing. More generally, disclosure of means adaptation is a disclosure of persuasive intent, which can increase elaboration and resistance to persuasion.

Implications of non-disclosure. The fact that persuasion profiles can be obtained and used without disclosing this to users is potentially a cause for concern. Potential reductions in effectiveness upon disclosure incentivize system designs to avoid disclosure of means adaptation.

Non-disclosure of means adaptation may have additional implications when combined with value being placed on the construction of an accurate persuasion profile. This requires some explanation. A simple system engaged in persuasion profiling could select influence strategies and implementations based on which is estimated to have the largest effect in the present case; the model would thus be engaged in passive learning. However, we anticipate that systems will take a more complex approach, employing active learning techniques [e.g., 6]. In active learning the actions selected by the system (e.g., the selection of the influence strategy and its implementation) are chosen not only based on the value of any resulting attitude or behavior change but also on the value of predicted improvements to the model resulting from observing the individual’s response. Increased precision, generality, or comprehensiveness of a persuasion profile may be valued (a) because the profile will be more effective in the present context or (b) because a more precise profile would be more effective in another context or more valuable in a market for persuasion profiles.
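The contrast between passive and active selection can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the approach of any particular system: beliefs about each strategy's effect are summarized by a normal posterior (mean, variance), the value of information is approximated by the expected drop in posterior variance after one more observation, and `info_weight`, `noise_variance`, and all numbers are hypothetical.

```python
from typing import Dict, Tuple

# Each strategy maps to (posterior mean, posterior variance) of its effect
# for one user. All numbers below are made up for illustration.
Beliefs = Dict[str, Tuple[float, float]]


def expected_variance_reduction(variance: float, noise_variance: float) -> float:
    """Drop in posterior variance after one noisy observation (normal-normal model)."""
    posterior_variance = 1.0 / (1.0 / variance + 1.0 / noise_variance)
    return variance - posterior_variance


def select_passive(beliefs: Beliefs) -> str:
    """Pick the strategy with the largest estimated effect right now."""
    return max(beliefs, key=lambda s: beliefs[s][0])


def select_active(beliefs: Beliefs, info_weight: float, noise_variance: float = 1.0) -> str:
    """Pick the strategy maximizing estimated effect plus weighted information gain."""

    def score(strategy: str) -> float:
        mean, variance = beliefs[strategy]
        gain = expected_variance_reduction(variance, noise_variance)
        return mean + info_weight * gain

    return max(beliefs, key=score)


beliefs = {
    "authority": (0.10, 0.01),  # well-estimated, slightly better on average
    "scarcity": (0.08, 0.50),   # poorly estimated, so observing it is informative
}

print(select_passive(beliefs))
print(select_active(beliefs, info_weight=1.0))
```

With these numbers the passive rule picks the strategy with the higher estimated effect, while the active rule picks the uncertain one because observing it would sharpen the profile. The user has no way to tell which rule produced the message they see.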

These latter cases involve systems taking actions that are estimated to be non-optimal for their apparent goals. For example, a mobile exercise coach could present a message that is not estimated to be the most effective in increasing overall activity level in order to build a more precise, general, or comprehensive persuasion profile. Users of such a system might reasonably expect that it is designed to be effective in coaching them, but it is in fact also selecting actions for other reasons, e.g., selling precise, general, and comprehensive persuasion profiles is part of the company’s business plan. That is, if a system is designed to value constructing a persuasion profile, its behavior may differ substantially from its anticipated core behavior.

[1] Aarts, E.H.L., Markopoulos, P., Ruyter, B.E.R.: The persuasiveness of ambient intelligence. In: Petkovic, M., Jonker, W. (eds.) Security, Privacy and Trust in Modern Data Management. Springer, Heidelberg (2007)

[2] Baker, S.: Learning, and profiting, from online friendships. BusinessWeek 9(22) (May 2009)

[3] Berdichevsky, D., Neunschwander, E.: Toward an ethics of persuasive technology. Commun. ACM 42(5), 51–58 (1999)

[4] Cacioppo, J.T., Petty, R.E., Kao, C.F., Rodriguez, R.: Central and peripheral routes to persuasion: An individual difference perspective. Journal of Personality and Social Psychology 51(5), 1032–1043 (1986)

[5] Cialdini, R.: Influence: Science and Practice. Allyn & Bacon, Boston (2001)

[6] Cohn, D.A., Ghahramani, Z., Jordan, M.I.: Active learning with statistical models. Journal of Artificial Intelligence Research 4, 129–145 (1996)

[7] Eckles, D.: Redefining persuasion for a mobile world. In: Fogg, B.J., Eckles, D. (eds.) Mobile Persuasion: 20 Perspectives on the Future of Behavior Change. Stanford Captology Media, Stanford (2007)

[8] Fogg, B.J.: Persuasive Technology: Using Computers to Change What We Think and Do. Morgan Kaufmann, San Francisco (2002)

[9] Fogg, B.J.: Protecting consumers in the next tech-ade, U.S. Federal Trade Commission hearing (November 2006), http://www.ftc.gov/bcp/workshops/techade/pdfs/transcript_061107.pdf

[10] Fogg, B.J.: The behavior grid: 35 ways behavior can change. In: Proc. of Persuasive Technology 2009, p. 42. ACM, New York (2009)

[11] Kaptein, M., Aarts, E.H.L., Ruyter, B.E.R., Markopoulos, P.: Persuasion in ambient intelligence. Journal of Ambient Intelligence and Humanized Computing 1, 43–56 (2009)

[12] Lacroix, J., Saini, P., Goris, A.: Understanding user cognitions to guide the tailoring of persuasive technology-based physical activity interventions. In: Proc. of Persuasive Technology 2009, vol. 350, p. 9. ACM, New York (2009)

[13] Petty, R.E., Wegener, D.T.: The elaboration likelihood model: Current status and controversies. In: Chaiken, S., Trope, Y. (eds.) Dual-process theories in social psychology, pp. 41–72. Guilford Press, New York (1999)

[14] Prabhaker, P.R.: Who owns the online consumer? Journal of Consumer Marketing 17, 158–171 (2000)

[15] Rawls, J.: The independence of moral theory. In: Proceedings and Addresses of the American Philosophical Association, vol. 48, pp. 5–22 (1974)

[16] Rhoads, K.: How many influence, persuasion, compliance tactics & strategies are there? (2007), http://www.workingpsychology.com/numbertactics.html

[17] Schafer, J.B., Konstan, J.A., Riedl, J.: E-commerce recommendation applications. Data Mining and Knowledge Discovery 5(1/2), 115–153 (2001)

- We were of course influenced by B.J. Fogg’s previous use of the term ‘persuasion profiling’, including in his comments to the Federal Trade Commission in 2006.

- This point can also be made in the language of interaction effects in analysis of variance: Persuasion profiles are estimates of person–strategy interaction effects. Thus, the end-independence of persuasion profiles requires not that the two-way strategy–context interaction effect is small, but that the three-way person–strategy–context interaction is small.

Academia vs. industry: Harvard CS vs. Google edition

Matt Welsh, a professor in the Harvard CS department, has decided to leave Harvard to continue his post-tenure leave working at Google. Welsh is obviously leaving a sweet job. In fact, it was not long ago that he was writing about how difficult it is to get tenure at Harvard.

So why is he leaving? Well, CS folks doing research in large distributed systems are in a tricky place, since the really big systems are all in industry. And instead of legions of experienced engineers to help build and study these systems, they have a bunch of lazy grad students! One might think, then, that this kind of (tenured) professor to industry move is limited to people creating and studying large deployments of computer systems.

There is a broader pull, I think. For researchers studying many central topics in the social sciences (e.g., social influence), there is a big draw to industry, since it is corporations that are collecting broad and deep data sets describing human behavior. To some extent, this is also a case of industry being appealing for people studying large deployments of computer systems, but it applies even to those who don’t care much about the “computer” part. In further parallels to the case with CS systems researchers, in industry they have talented database and machine learning experts ready to help, rather than social science grad students who are (like the faculty) too often afraid of math.

Not just predicting the present, but the future: Twitter and upcoming movies

Search queries have been used recently to “predict the present”, as Hal Varian has called it. Now some initial use of Twitter chatter to predict the future:

The chatter in Twitter can accurately predict the box-office revenues of upcoming movies weeks before they are released. In fact, Tweets can predict the performance of films better than market-based predictions, such as Hollywood Stock Exchange, which have been the best predictors to date. (Kevin Kelly)

Here is the paper by Asur and Huberman from HP Labs. Also see a similar use of online discussion forums.

But the obvious question from my previous post is, how much improvement do you get by adding more inputs to the model? That is, how does the combined Hollywood Stock Exchange and Twitter chatter model perform? The authors report adding the number of theaters the movie opens in to both models, but not combining them directly.

Search terms and the flu: preferring complex models

Simplicity has its draws. A simple model of some phenomenon can be quick to understand and test. But with the resources we have today for theory building and prediction, it is worth recognizing that many phenomena of interest (e.g., in social sciences, epidemiology) are very, very complex. Using a more complex model can help. It’s great to try many simple models along the way (as scaffolding), but if you have a large enough N in an observational study, a larger model will likely be an improvement.

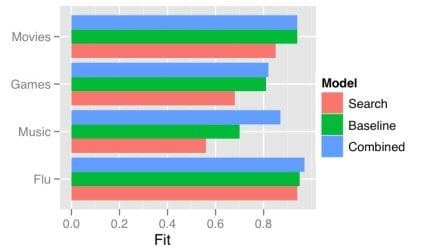

One obvious way a model gets more complex is by adding predictors. There has recently been a good deal of attention on using the frequency of search terms to predict important goings-on — like flu trends. Sharad Goel et al. (blog post, paper) temper the excitement a bit by demonstrating that simple models using other, existing public data sets outperform the search data. In some cases (music popularity, in particular), adding the search data to the model improves predictions: the more complex combined model can “explain” some of the variance not handled by the more basic non-search-data models.

This echoes one big takeaway from the Netflix Prize competition: committees win. The top competitors were all large teams formed from smaller teams and their models were tuned combinations of several models. That is, the strategy is, take a bunch of complex models and combine them.

One way of doing this is just taking a weighted average of the predictions of several simpler models. This works quite well when your measure of the value of your model is root mean squared error (RMSE), since RMSE is convex.
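Here is a small sketch of why convexity matters. When the weights are non-negative and sum to one, the RMSE of the blended predictions can be no worse than the same weighted average of the individual models' RMSEs. The data and the two "models" below are invented purely to illustrate the inequality.

```python
import math
from typing import List, Sequence


def rmse(predicted: Sequence[float], actual: Sequence[float]) -> float:
    """Root mean squared error between two equal-length sequences."""
    squared = [(p - a) ** 2 for p, a in zip(predicted, actual)]
    return math.sqrt(sum(squared) / len(squared))


def blend(predictions: Sequence[Sequence[float]], weights: Sequence[float]) -> List[float]:
    """Weighted average of several models' predictions, item by item."""
    n_items = len(predictions[0])
    return [
        sum(w * model[i] for w, model in zip(weights, predictions))
        for i in range(n_items)
    ]


# Made-up ratings and two made-up models whose errors partly cancel.
actual = [3.0, 4.0, 2.0, 5.0]
model_a = [2.5, 4.5, 2.0, 4.0]
model_b = [3.5, 3.0, 2.5, 5.5]
weights = [0.5, 0.5]  # non-negative and summing to one

blended = blend([model_a, model_b], weights)
average_of_rmses = sum(w * rmse(m, actual) for w, m in zip(weights, [model_a, model_b]))

print("model A RMSE:    ", rmse(model_a, actual))
print("model B RMSE:    ", rmse(model_b, actual))
print("blend RMSE:      ", rmse(blended, actual))
print("average of RMSEs:", average_of_rmses)
```

The guarantee is only an upper bound. How far the blend falls below it depends on how much the models' errors cancel, which is why committees of dissimilar models tend to do well.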

While often the larger model “explains” more of the variance, what “explains” means here is just that the R-squared is larger: less of the variance is error. More complex models can be difficult to understand, just like the phenomena they model. We will continue to need better tools to understand, visualize, and evaluate our models as their complexity increases. I think the committee metaphor will be an interesting and practical one to apply in the many cases where the best we can do is use a weighted average of several simpler, pretty good models.

Advanced Soldier Sensor Information System and Technology

Yes, that spells ASSIST.

Check out this call for proposals from DARPA (also see Wired News). This research program is designed to create and evaluate systems that use sensors to capture soldiers’ experiences in the field, thus allowing for (spatially and temporally) distant review and analysis of this data, as well as augmenting their abilities while still in the field.

I found it interesting to consider differences in requirements between this program and others that would apply some similar technologies and involve similar interactions — but for other purposes. For example, two such uses are (1) everyday life recording for social sharing and memory and (2) rich data collection as part of ethnographic observation and participation.

When doing some observation myself, I strung my cameraphone around my neck and used Waymarkr to automatically capture a photo every minute or so. Check out the results from my visit to a flea market in San Francisco.

Photos of two ways to wear a cameraphone from Waymarkr. Incidentally, Waymarkr uses the cell-tower-based location API created for ZoneTag, a project I worked on at Yahoo! Research Berkeley.

Also, for a use more like (1) in a fashion context, see Blogging in Motion. This project (for Yahoo! Hack Day) created an “auto-blogging purse” that captures photos (again using ZoneTag) whenever the wearer moves around (sensed using GPS).