Search terms and the flu: preferring complex models

Simplicity has its draws. A simple model of some phenomenon can be quick to understand and test. But with the resources we have today for theory building and prediction, it is worth recognizing that many phenomena of interest (e.g., in social sciences, epidemiology) are very, very complex. Using a more complex model can help. It’s great to try many simple models along the way, as scaffolding, but if you have a large enough N in an observational study, a larger model will likely be an improvement.

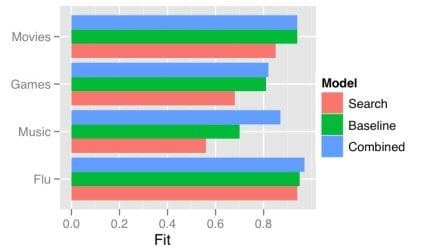

One obvious way a model gets more complex is by adding predictors. There has recently been a good deal of attention on using the frequency of search terms to predict important goings-on — like flu trends. Sharad Goel et al. (blog post, paper) temper the excitement a bit by demonstrating that simple models using other, existing public data sets outperform the search data. In some cases (music popularity, in particular), adding the search data to the model improves predictions: the more complex combined model can “explain” some of the variance not handled by the more basic non-search-data models.

This echoes one big takeaway from the Netflix Prize competition: committees win. The top competitors were all large teams formed from smaller teams, and their models were tuned combinations of several models. That is, the strategy is to take a bunch of complex models and combine them.

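One way of doing this is simply to take a weighted average of the predictions of several simpler models. This works quite well when your measure of a model’s value is root mean squared error (RMSE): since RMSE is convex in the predictions, the RMSE of a weighted average (with nonnegative weights that sum to one) is never worse than the same weighted average of the individual models’ RMSEs.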
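To make the convexity point concrete, here is a minimal sketch in Python. The numbers are made up for illustration, and the helper names `rmse` and `weighted_average` are my own, not from any of the work mentioned above. It blends two hypothetical models with equal weights and compares the blend's RMSE with the average of the two individual RMSEs.

```python
import math

def rmse(predictions, actual):
    """Root mean squared error between two equal-length sequences."""
    squared = [(p - a) ** 2 for p, a in zip(predictions, actual)]
    return math.sqrt(sum(squared) / len(squared))

def weighted_average(prediction_sets, weights):
    """Blend several models' predictions using nonnegative weights."""
    total = sum(weights)
    n = len(prediction_sets[0])
    return [
        sum(w * preds[i] for w, preds in zip(weights, prediction_sets)) / total
        for i in range(n)
    ]

# Made-up outcomes and predictions from two hypothetical models.
actual = [3.0, 5.0, 2.0, 4.0]
model_a = [4.0, 4.0, 3.0, 3.0]
model_b = [2.0, 5.0, 1.0, 5.0]
weights = [0.5, 0.5]

blend = weighted_average([model_a, model_b], weights)

print("model A RMSE:", rmse(model_a, actual))
print("model B RMSE:", rmse(model_b, actual))
print("blend RMSE:  ", rmse(blend, actual))
print("average of individual RMSEs:",
      0.5 * rmse(model_a, actual) + 0.5 * rmse(model_b, actual))
```

In this toy case the two models err in opposite directions on several items, so the blend does much better than either one. Convexity only guarantees that the blend is no worse than the weighted average of the individual errors; how much better it gets depends on how correlated the models' errors are.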

While often the larger model “explains” more of the variance, what “explains” means here is just that the R-squared is larger: less of the variance is error. More complex models can be difficult to understand, just like the phenomena they model. We will continue to need better tools to understand, visualize, and evaluate our models as their complexity increases. I think the committee metaphor will be an interesting and practical one to apply in the many cases where the best we can do is use a weighted average of several simpler, pretty good models.
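As a small illustration of what “explains” amounts to, here is a sketch that computes R-squared as one minus the ratio of residual variation to total variation. The data and the two sets of predictions are invented for the example, and `r_squared` is just a name I chose.

```python
def r_squared(predictions, actual):
    """Share of the variance in `actual` that is not left over as error."""
    mean = sum(actual) / len(actual)
    ss_total = sum((a - mean) ** 2 for a in actual)
    ss_resid = sum((a - p) ** 2 for p, a in zip(predictions, actual))
    return 1.0 - ss_resid / ss_total

# Invented outcomes, plus predictions from a hypothetical basic model
# and a hypothetical larger model that adds more predictors.
actual = [3.0, 5.0, 2.0, 4.0, 6.0]
basic_model = [4.0, 4.0, 3.0, 4.0, 5.0]
combined_model = [3.5, 4.5, 2.0, 4.0, 6.0]

print("basic R-squared:   ", r_squared(basic_model, actual))
print("combined R-squared:", r_squared(combined_model, actual))
```

A higher number here says only that the residuals are smaller on this data; it says nothing by itself about whether the larger model is easier to interpret or will predict new data better.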

Using social networks for persuasion profiling

BusinessWeek has an exuberant review of current industry research and product development related to understanding social networks using data from social network sites and other online communication such as email. It includes snippets from people doing very interesting social science research, like Duncan Watts, Cameron Marlow, and danah boyd. So it is worth checking out, even if you’re already familiar with the Facebook Data Team’s recent public reports (“Maintained Relationships”, “Gesundheit!”).

But I actually want to comment not on their comments, but on this section:

In an industry where the majority of ads go unclicked, even a small boost can make a big difference. One San Francisco advertising company, Rapleaf, carried out a friend-based campaign for a credit-card company that wanted to sell bank products to existing customers. Tailoring offers based on friends’ responses helped lift the average click rate from 0.9% to 2.7%. Although 97.3% of the people surfed past the ads, the click rate still tripled.

Rapleaf, which has harvested data from blogs, online forums, and social networks, says it follows the network behavior of 480 million people. It furnishes friendship data to help customers fine-tune their promotions. Its studies indicate borrowers are a better bet if their friends have higher credit ratings. This might mean a home buyer with a middling credit risk score of 550 should be treated as closer to 600 if most of his or her friends are in that range, says Rapleaf CEO Auren Hoffman.

The idea is that since you are more likely to behave like your friends, their behavior can be used to profile you and tailor some marketing to be more likely to result in compliance.

In the Persuasive Technology Lab at Stanford University, BJ Fogg has long emphasized how powerful and worrying personalization based on this kind of “persuasion profile” can be. Imagine that rather than just personalizing screens based on the books you are expected to like (a familiar idea), Amazon selects the kinds of influence strategies used based on a representation of what strategies work best against you: “Dean is a sucker for limited-time offers”, “Foot-in-the-door works really well against Domenico, especially when he is buying a gift.”

In 2006 two of our students, Fred Leach and Schuyler Kaye, created this goofy video illustrating approximately this concept:

My sense is that this kind of personalization is in wide use at places like Amazon, except that their “units of analysis/personalization” are individual tactics (e.g., Gold Box offers), rather than the social influence strategies that can be implemented in many ways and in combination with each other.

What’s interesting about the Rapleaf work described by BusinessWeek is that this enables persuasion profiling even before a service provider or marketer knows anything about you — except that you were referred by or are otherwise connected to a person. This gives them the ability to estimate your persuasion profile by using your social neighborhood, even if you haven’t disclosed this information about your social network.
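To sketch what estimating a profile from a social neighborhood might look like in the simplest case, here is a toy example. Everything in it is hypothetical: the names, the response rates, the strategy labels, and the choice to use a plain average over friends. I have no knowledge of how Rapleaf or anyone else actually does this.

```python
from statistics import mean

# Hypothetical observed response rates, per person and influence strategy.
responses = {
    "ana": {"scarcity": 0.30, "social_proof": 0.10},
    "ben": {"scarcity": 0.20, "social_proof": 0.05},
    "cy": {"scarcity": 0.40, "social_proof": 0.15},
}

# Hypothetical friendship data: the newcomer has disclosed nothing directly.
friends = {"newcomer": ["ana", "ben", "cy"]}

def estimate_profile(person, friends, responses):
    """Guess a person's profile as the average of their friends' known rates."""
    known = [responses[f] for f in friends.get(person, []) if f in responses]
    if not known:
        return {}  # no neighborhood data, so no estimate
    strategies = set().union(*known)
    return {s: mean(r[s] for r in known if s in r) for s in strategies}

profile = estimate_profile("newcomer", friends, responses)
best_strategy = max(profile, key=profile.get)
print(profile)
print("strategy to try first:", best_strategy)
```

Whether an average like this is any good depends on exactly the open question here: how much friends' responses to influence strategies actually covary.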

While there has been some research on individual differences in responses to influence strategies (including when used by computers), as far as I know there isn’t much work on just how much the responses of friends covary. As a tool for influencers online, it doesn’t matter as much whether this variation explained by friends’ responses is also explained by other variables, as long as those variables aren’t available for the influencers to collect. But for us social scientists, it would be interesting to understand the mechanism behind this relationship: is it just that friends are likely to be similar in a bunch of ways and these predict our “persuasion profiles”, or are there processes of relationship creation that directly involve these similarities?

This is an exciting and scary direction, and I want to learn more about it.

Expert users: agreement in focus from two threads of human-computer interaction research

Much of current human-computer interaction (HCI) research focuses on novice users in “walk-up and use” scenarios. I can think of three major causes for this:

- A general shift from examining non-discretionary use to discretionary use

- How much easier it is to find (and not train) study participants unfamiliar with a system than experts (especially with a system that is only a prototype)

- The push from practitioners in this direction, especially with the advent of the Web, where new users just show up at your site, often deep-linked

This focus sometimes comes in for criticism, especially when the second of these is taken as a main cause of the choice.

On the other hand, some research threads in HCI continue to focus on expert use. As I’ve been reading a lot of research on both human performance modeling and situated & embodied approaches to HCI, it has been interesting to note that both instead have (comparatively) a much bigger focus on the performance and experience of expert and skilled use.

Grudin’s “Three Faces of Human-Computer Interaction” does a good job of explaining the human performance modeling (HPM) side of this. HPM owes a lot to human factors historically, and while The Psychology of Human-Computer Interaction successfully brought engineering-oriented cognitive psychology to the field, it was human factors, said Stuart Card, “that we were trying to improve” (Grudin 2005, p. 7). And the focus of human factors, which arose from maximizing productivity in industrial settings like factories, has been non-discretionary use. Fundamentally, it is hard for HPM to exist without a focus on expert use because many of the differences — and thus research contributions through new interaction techniques — can only be identified and are only important for use by experts or at least trained users. Grudin notes:

A leading modeler discouraged publication of a 1984 study of a repetitive task that showed people preferred a pleasant but slower interaction technique—a result significant for discretionary use, but not for modeling aimed at maximizing performance.

Situated action and embodied interaction approaches to HCI, which Harrison, Tatar, and Sengers (2007) have called the “third paradigm of HCI”, are a somewhat different story. While HPM research, like a good amount in traditional cognitive science generally, contributes to science and design by assimilating people to information processors with actuators, situated and embodied interaction research borrows a fundamental concern of ethnomethodology, focusing on how people actively make behaviors intelligible by assimilating them to social and rational action.

There are at least three ways this motivates the study of skilled and expert users:

- Along with this research topic comes a methodological concern for studying behavior in context with the people who really do it. For example, to study publishing systems and technology, the existing practices of people working in such a setting of interest are of critical importance.

- These approaches emphasize the skills we all have and the value of drawing on them for design. For example, Dourish (2001) emphasizes the skills with which we all navigate the physical and social world as a resource for design. This is not unrelated to the first way.

- These approaches, like and through their relationships to the participatory design movement, have a political, social, and ethical interest in empowering those who will be impacted by technology, especially when otherwise its design — and the decision to adopt it — would be out of their control. Non-discretionary use in institutions is the paradigm prompting situation for this.

I don’t have a broad conclusion to make. Rather, I just find it of note and interesting that these two very different threads in HCI research stand out from much other work as similar in this regard. Some of my current research is connecting these two threads, so expect more on their relationship.

References

Dourish, P. (2001). Where the Action Is: The Foundations of Embodied Interaction. MIT Press.

Grudin, J. (2005). Three Faces of Human-Computer Interaction. IEEE Ann. Hist. Comput. 27, 4 (Oct. 2005), 46-62.

Harrison, S., Tatar, D., and Sengers, P. (2007). The Three Paradigms of HCI. Extended Abstracts CHI 2007.

Riskful decisions and riskful thinking: Donald Davidson and Cliff Nass

Two personal-professional narratives that I’ve been somewhat familiar with for a while have recently highlighted for me the significance of riskful decisions and thinking in academia. I think the stories are interesting on their own, but they also emphasize some questions and concerns for the functioning of scholarly inquiry.

The first is about the American philosopher Donald Davidson, whose work has long been of great interest to me (and was the topic of my undergraduate Honors thesis). The second is about Cliff Nass (Clifford Nass), Professor of Communication at Stanford, an advisor and collaborator. The major published source I draw on for each of these narratives is an interview: for Davidson’s story, it is an interview by Ernest Lepore (2004), a critic and expositor of Davidson’s philosophy; for Cliff Nass, it is an interview by Tamara Adlin (2007). After sharing these stories, I’ll discuss some similarities and briefly discuss risk-taking in decisions and thinking.

Donald Davidson is considered one of the most important and influential philosophers of the past 60 years, and he is my personal favorite. Davidson is often described as a highly systematic philosopher — uncharacteristically so for 20th century philosophy, in that his contributions to several areas of philosophy (philosophy of language, mind, and action, semantics, and epistemology) are deeply connected in their method and the proposed theories. He is the paradigmatic programmatic philosopher of the 20th century.

Despite this, Davidson’s philosophical program did not emerge until relatively late in his career. The same is true of his publications in general. Only after accepting a tenure track position at Stanford in 1951 (which was then still up-and-coming, though quickly, in philosophy) did he begin to publish (nothing was even in the “pipeline” before this). This began under the wing of the younger Patrick Suppes, with whom Davidson co-authored a book (1957) on decision theory. His first philosophical article appeared in 1963 (which he authored alone only through an unexpected death). As Davidson puts it in an interview with Ernest Lepore, “I was very inhibited so far as publication was concerned” and was worried “that the minute I actually published something, everyone was going to jump on me” (Lepore 2004).

Then Davidson published “Actions, Reasons and Causes” (1963), twelve years after joining the Stanford faculty. It argues against the late-Wittgensteinian dogma that reasons are not also causes. Only with this paper did a publication by Davidson draw significant attention from the community (beginning with a presentation of the paper at a meeting of the American Philosophical Association). This paper has been hugely influential and alone identified Davidson as an important thinker in the field, though he was surprised that the reception was not as overwhelming as he had expected: “I didn’t realize that if you publish, as far as I can tell, no one was going to pay any attention.” Many responses, both positive and critical, did eventually come, and Davidson went on to publish many highly influential papers, reaching the height of his immense scholarly influence in the 1970s and 1980s.

Clifford Nass is a widely known researcher in the psychology of human-computer interaction (HCI). With Byron Reeves, he wrote The Media Equation (1996), which presents research carried out at Stanford University on how people respond in mediated interactions (e.g. with computers and televisions) by overextending social rules normally applied to other people. This hints at the (here simplified) straight, bold line of Nass’s research program: take a finding from social psychology, replace the second human with a computer, see if you get the same results. This exact strategy has been modified and expanded upon, but the general consistency of Nass’s program over many years is striking for HCI: unlike in psychology, for example, in HCI there are many investigators seeking low-hanging fruit and quickly moving on to new projects.

Nass likes to refer to his “accidental PhD”, as he hadn’t intended to get a PhD in sociology. After working for a year at Intel, he was planning to matriculate in an electrical engineering PhD program, but an unexpected death postponed that. “[J]ust to bide my time and to have some flexibility, I ended up doing a sociology degree,” says Nass. He did his dissertation on the role of pre-processing jobs in labor, taking an approach that was radical in its elimination of a role for people and that connected with contemporary research by social science outsiders doing “sociocybernetics”. With such a dissertation topic (and the dissertation itself unfinished), finding a job did not seem easy at the outset: “It’s a nutty topic. I was going to be in trouble getting jobs. I had published stuff and was doing work and all that, but my dissertation was so weird” (Adlin 2007).

There was, however, a bit of luck, well taken advantage of by Nass: the Stanford Communication Department was under construction and looking to hire some folks doing weird work. So when Nass interviewed, impressing both them and the Sociology Department, he got the job, despite knowing nothing about Communication as a discipline and having been to no conferences in the field. After beginning at Stanford, Nass was seeking a research program, as clearly there was something wrong, at least when it came to getting it accepted for academic publication, with his previous work: “I was having a terrible time getting my work accepted. In fact, to this day I’ve still never published anything off my dissertation, 20-odd years later. Because again, no field could figure out who owned the material. I got reviews like, ‘This work is offensive.’”

But Nass couldn’t settle on any normal research program. He wanted to examine how people might treat computers socially. Getting funding for this work wouldn’t have been easy, but he got a grant that the grant administrator described as the one of 35 that they chose to give to the “weirdest project that was proposed”. It wasn’t all easy from there, of course. For example, it took some time to design and carry out successful experiments in this program, and even longer to get the results published. But the risk taken in awarding this grant helped enable the work to continue.

Cliff Nass is very clear about the role riskful decisions, in admissions, hiring, and funding, played in his success:

I was very lucky. I fear that those times are gone. I really do fear to a tremendous degree that the risk-taking these people were willing to do for me, to give me an opportunity, are gone. I try to remember that. […]

I benefited from the willingness of people to say, “We’re just going to roll the dice here.”

Of course, it isn’t just Cliff who got lucky; in a big sense we all did. His work has been an important influence in HCI and has contributed to our stores of both generalizable knowledge and new lenses for approaching how we get on in the world.

What does it mean for academic research, and science generally, if this choice and ability to take these risks evaporates? There is incredible competition for academic positions now, more so in some fields than others. And the best tool in getting a job is a whole list of publications accepted in important, mainstream journals in the field. There is a lot written about the competition for academic jobs and about the criteria for wading through applicants, which sometimes favor a safe option. There are case studies of families of disciplines; for example, a study of the biosciences argues that market forces are failing to create sufficient job prospects for young investigators (Freeman et al. 2001).

I won’t review them all here. Instead I suggest an article for general readers from The New York Times about state and regional colleges’ use of non-tenure track positions, which has an impact on the institutions’ bottom line and flexibility (Finder 2007). This is part of a wider trend in how tenure is used that also impacts the academic freedom and resources that scholars have to pursue new research (Richardson 1999).

Enabling riskful thinking

Hans Ulrich Gumbrecht argues that “riskful thinking” is central to the value of the humanities and arts in academia. He defines riskful thinking as investigation that can’t be expected to produce results interpretable as easy answers, but that instead is likely to produce or highlight complex and confusing phenomena and problems. But I think that this is more broadly true. Riskful thinking is critical to interdisciplinary and pre-paradigmatic sciences, or disciplines long doing normal science but in need of a shake-up. These are situations where compelling phenomena can become paradigmatic cases for study and powerful vocabularies can allow formulating new problems and theories.

What threatens riskful thinking, and how can we enable it? What is so great about riskful thinking anyway, and what makes some riskful thinking so successful, while much of it is likely to fail? At Nokia Research Center in Palo Alto, our lab head John Shen champions the importance of risk taking in industry research, but also argues that risk-taking is often misunderstood and that it is only some kinds of risk-taking that are most important to cultivate in industry research.

—

Finally, a list of Davidson–Nass similarities, just for fun:

- Both were hired to tenure track positions at Stanford, where they first did and published highly influential work

- Both are easily and widely seen as highly programmatic, having defined a clear research program that challenged currently popular approaches and beliefs in their fields

- Both had great difficulty finding early, publishable success with their research programs, even after ceasing their early work (Davidson: Plato, empirical decision theory; Nass: information processing models of the labor force)

- Both had other draws and distractions (Davidson: business school, teaching plane identification in WWII; Nass: being a professional magician, working at Intel)

- Both produced dissertations viewed by others in the discipline as odd (Davidson: Quine “was a little mystified by my writing on this. He never talked to me about it.”; Nass: “my PhD thesis was so bizarre”)

References

Finder, A. (2007, November 20). Decline of the Tenure Track Raises Concerns. The New York Times.

Freeman, R., Weinstein, E., Marincola, E., Rosenbaum, J., & Solomon, F. (2001). Careers: Competition and Careers in Biosciences. Science, 294(5550), 2293-2294.

Lepore, E. (2004). Interview with Donald Davidson. In Problems of Rationality, Oxford University Press, 2004, pp. 231-266.

Nass, C., Steuer, J., & Tauber, E. R. (1994). Computers are social actors. In Proc. of CHI 1994. ACM Press.

Reeves, B., & Nass, C. (1996). The media equation: how people treat computers, television, and new media like real people and places. Cambridge University Press.

Richardson, J. T. (1999). Tenure in the New Millennium. National Forum, 79(1), 19-23.

Sanford, J. (2000, November 17). ‘Elementary pleasures’ and ‘riskful thinking’ matter to Gumbrecht. Stanford Report.